- Welcome to Our Lab

- +61 7 3138 7195

- m1.haque[at]qut.edu.au

Driving Simulator

The CARRS-Q Advanced Driving Simulator is located at the Queensland University of Technology (QUT). This high-fidelity simulator consists of a complete car with working controls and instruments, surrounded by three front-view projectors that give drivers a 180-degree, high-resolution field of view. The wing mirrors and the rear-view mirror are replaced by LCD monitors that display simulated mirror images. Road images and interactive traffic are rendered at life size on the front-view projection, the wing mirrors and the rear-view mirror at 60 Hz to provide a photorealistic virtual environment. The car is a complete Holden Commodore with an automatic transmission. The full-bodied car rests on a motion base with six degrees of freedom that can move and twist in three dimensions to accurately reproduce motion cues for sustained acceleration, braking manoeuvres, cornering and interaction with varying road surfaces. The simulator also reproduces the forces that drivers feel through the steering wheel while negotiating curves. It runs SCANeR™ studio software on eight computers that link the vehicle dynamics with the virtual road traffic environment. The car's audio system is linked to the simulator software so that it can accurately reproduce surround sound for engine noise, external road noise and other traffic interactions, further enhancing the realism of the driving experience. Driving performance data such as position, speed, acceleration and braking are recorded at rates of up to 20 Hz.
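Because data are recorded at rates of up to 20 Hz, logged samples may not be evenly spaced in time. A minimal sketch of aligning one logged signal to a uniform 20 Hz grid by linear interpolation is shown below. The `(time, value)` log layout and the function name `resample_uniform` are hypothetical and do not describe the SCANeR™ studio export format.

```python
from bisect import bisect_right
from typing import List, Sequence, Tuple


def resample_uniform(
    times_s: Sequence[float], values: Sequence[float], rate_hz: float = 20.0
) -> Tuple[List[float], List[float]]:
    """Linearly interpolate (time, value) samples onto a uniform time grid.

    Hypothetical helper: assumes strictly increasing timestamps in seconds,
    not any particular simulator export format.
    """
    if len(times_s) != len(values) or len(times_s) < 2:
        raise ValueError("need at least two (time, value) pairs of equal length")
    step = 1.0 / rate_hz
    t0 = times_s[0]
    # Number of grid points that fit between the first and last sample.
    n = int((times_s[-1] - t0) * rate_hz + 1e-9) + 1
    grid_t: List[float] = []
    grid_v: List[float] = []
    for k in range(n):
        t = t0 + k * step
        # Index of the last original sample at or before t, clamped so that
        # [i, i + 1] is always a valid interpolation interval.
        i = min(max(bisect_right(times_s, t) - 1, 0), len(times_s) - 2)
        t_a, t_b = times_s[i], times_s[i + 1]
        v_a, v_b = values[i], values[i + 1]
        frac = (t - t_a) / (t_b - t_a)
        grid_t.append(t)
        grid_v.append(v_a + frac * (v_b - v_a))
    return grid_t, grid_v


# Usage: speed samples (m/s) logged every 0.1 s, resampled to 20 Hz.
grid_t, grid_v = resample_uniform([0.0, 0.1, 0.2], [10.0, 11.0, 12.0])
```

Linear interpolation is the simplest choice here; it does not add information between samples, it only puts every signal on the same time base so that, for example, speed and braking can be compared frame by frame.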

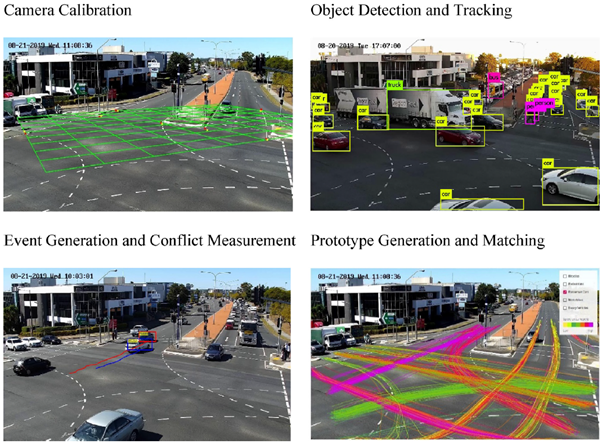

Automated Video Data Processing

Traffic conflicts identified through automated video processing are often linked to crashes, using methods that establish a correlation between non-crash-based (conflict) and crash-based approaches. Raw video footage obtained from intersections is processed using an Artificial Intelligence (AI)-based video analysis platform developed at the Queensland University of Technology. The automated video data processing method involves six main procedures: camera calibration, object detection and tracking, prototype generation, prototype matching, event generation, and conflict identification.
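The last two procedures, event generation and conflict identification, can be illustrated with time-to-collision (TTC), a widely used conflict indicator. The sketch below is a simplified stand-in, not the QUT platform's method: it assumes a rear-end (car-following) interaction at constant speeds, a hypothetical per-frame `FollowingState` record, and an illustrative 1.5 s threshold. Consecutive frames with TTC below the threshold are grouped into one conflict event, and the event's minimum TTC is reported.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional

# Illustrative threshold (an assumption, not taken from the QUT platform).
TTC_THRESHOLD_S = 1.5


@dataclass
class FollowingState:
    """Hypothetical per-frame record of one follower/leader pair."""
    t: float             # timestamp (s)
    gap_m: float         # bumper-to-bumper gap, follower to leader (m)
    follower_mps: float  # follower speed (m/s)
    leader_mps: float    # leader speed (m/s)


@dataclass
class ConflictEvent:
    start_t: float
    end_t: float
    min_ttc: float


def time_to_collision(s: FollowingState) -> Optional[float]:
    """Constant-speed rear-end TTC; None when the follower is not closing in."""
    closing = s.follower_mps - s.leader_mps
    if closing <= 0.0:
        return None
    return s.gap_m / closing


def identify_conflicts(
    states: Iterable[FollowingState], threshold: float = TTC_THRESHOLD_S
) -> List[ConflictEvent]:
    """Group consecutive frames with TTC below the threshold into events."""
    events: List[ConflictEvent] = []
    current: Optional[ConflictEvent] = None
    for s in states:
        ttc = time_to_collision(s)
        if ttc is not None and ttc < threshold:
            if current is None:
                current = ConflictEvent(s.t, s.t, ttc)  # event starts
            else:
                current.end_t = s.t
                current.min_ttc = min(current.min_ttc, ttc)
        elif current is not None:
            events.append(current)  # event ends at the last flagged frame
            current = None
    if current is not None:
        events.append(current)  # event still open at the end of the data
    return events


frames = [
    FollowingState(0.0, 10.0, 15.0, 10.0),  # TTC 2.0 s
    FollowingState(1.0, 5.0, 15.0, 10.0),   # TTC 1.0 s
    FollowingState(1.5, 3.0, 14.0, 10.0),   # TTC 0.75 s
    FollowingState(2.0, 2.0, 10.0, 10.0),   # equal speeds: not closing
]
events = identify_conflicts(frames)
```

Here `events` holds a single event from 1.0 s to 1.5 s with a minimum TTC of 0.75 s. Real pipelines handle crossing and turning interactions as well, which need a 2D collision-course test rather than this 1D gap formula.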

The first step is camera calibration, which yields estimates of the camera parameters for positional analysis of road users. This step is required because each camera projects the three-dimensional real world onto a two-dimensional image; the estimated parameters relate positions in the image to positions in the real world. The next steps involve detecting road users and tracking them across frames. The approach uses the YOLOv3 algorithm to detect objects in the traffic scene and the Deep-SORT algorithm to track all road users (motorized as well as non-motorized), for greater efficiency in the object detection and tracking module. The extracted vehicle trajectories are then analyzed to estimate conflict indicators for the conflict events of interest.
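Once the cameras are calibrated, tracked detections can be mapped from pixels to road coordinates before any conflict indicator is computed. A minimal sketch follows, assuming a flat road so that calibration can be summarised as a 3×3 homography `H` from image pixels to ground-plane metres. The matrix values, the helper names, the 10 frames-per-second rate, and the use of each bounding box's bottom-centre as the ground contact point are all assumptions for illustration.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Matrix3 = Sequence[Sequence[float]]


def image_to_ground(H: Matrix3, u: float, v: float) -> Point:
    """Map pixel (u, v) to ground-plane (x, y) with a 3x3 homography H.

    Assumes a flat road; H is a hypothetical output of camera calibration.
    """
    x = H[0][0] * u + H[0][1] * v + H[0][2]
    y = H[1][0] * u + H[1][1] * v + H[1][2]
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("pixel lies on the horizon line of the ground plane")
    return x / w, y / w


def box_ground_point(H: Matrix3, box: Tuple[float, float, float, float]) -> Point:
    """Project the bottom-centre of a (left, top, right, bottom) pixel box."""
    left, _top, right, bottom = box
    return image_to_ground(H, (left + right) / 2.0, bottom)


def speeds_mps(ground_track: List[Point], fps: float) -> List[float]:
    """Finite-difference speed between consecutive frames of one track."""
    return [
        math.hypot(x1 - x0, y1 - y0) * fps
        for (x0, y0), (x1, y1) in zip(ground_track, ground_track[1:])
    ]


# Hypothetical top-down view: 0.05 m per pixel, no perspective.
H = [[0.05, 0.0, 0.0], [0.0, 0.05, 0.0], [0.0, 0.0, 1.0]]
boxes = [(90.0, 60.0, 110.0, 100.0), (110.0, 60.0, 130.0, 100.0)]
track = [box_ground_point(H, b) for b in boxes]
speeds = speeds_mps(track, fps=10.0)
```

With these values the box moves 20 pixels (about 1 m) between frames, so `speeds` is approximately `[10.0]` m/s. A real oblique camera gives a non-trivial third row in `H`, which is why the division by `w` is kept.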